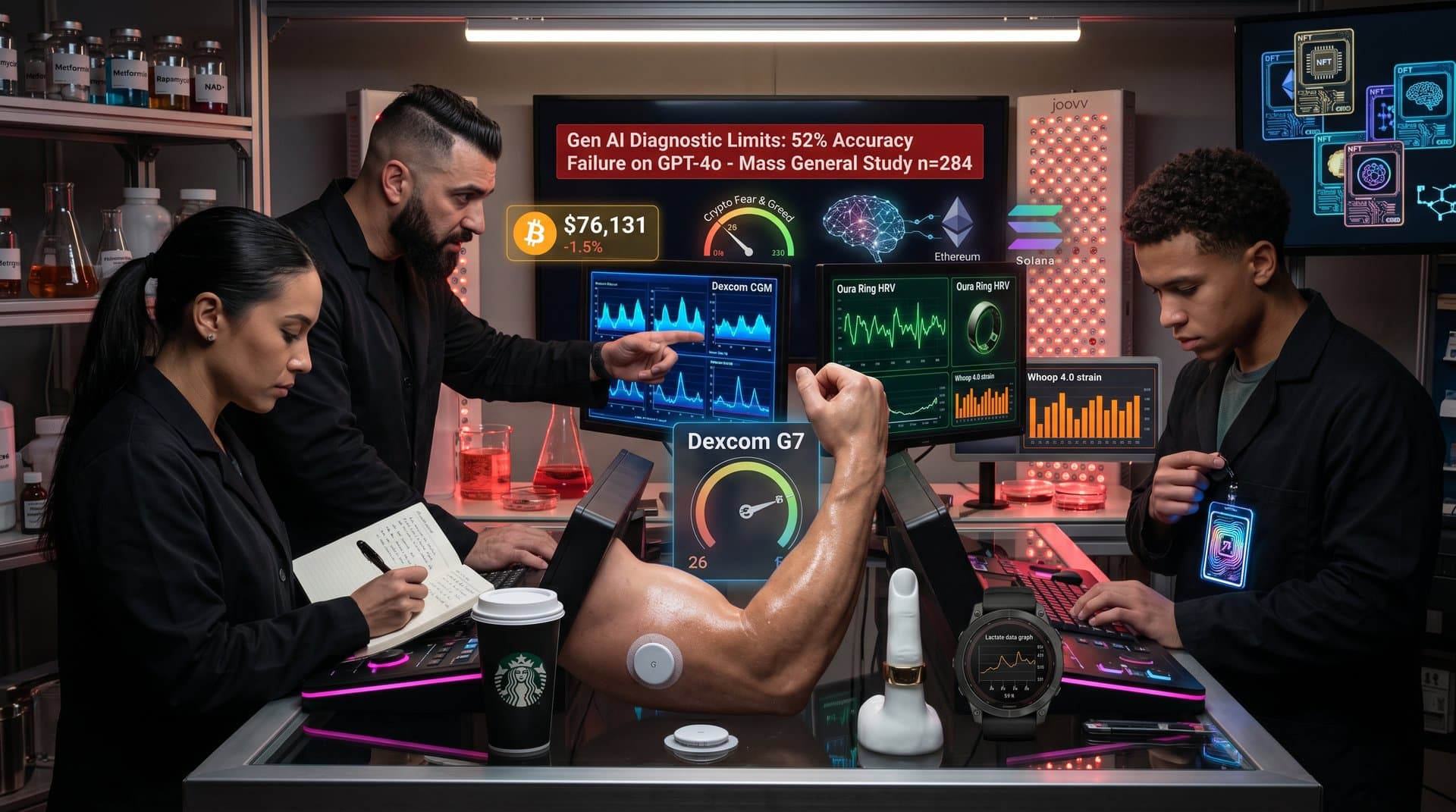

- Mass General Brigham tested 6 AI models on ~1,000 cases; failures in 40-70% of complex differentials.

- Fear & Greed Index at 26 signals caution for AI health tech investments.

- BTC fell 2.0% to $76,259 USD, reflecting broader tech hype retreat.

A Mass General Brigham study shows that AI chatbots' differential diagnoses fail in 40-70% of complex cases, Fierce Healthcare reported in 2024. Researchers tested top models on anonymized patient scenarios. Biohackers who use AI for healthspan optimization face heightened risks.

Mass General Brigham Tests Six Leading AI Models

Mass General Brigham evaluated six generative AI models, including OpenAI's GPT-4 and GPT-4o, Anthropic's Claude 3 Opus and Google's Gemini, on about 1,000 real-world anonymized cases. Symptoms included fatigue, brain fog and cognitive decline, complaints that are common in longevity communities.

AI chatbots produced incomplete lists or misranked conditions in 40-70% of cases, according to the Fierce Healthcare report (2024). Human physicians were more accurate because they factored in patient history, labs and longitudinal data.
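To make "incomplete lists or misranked conditions" concrete, here is a minimal Python sketch of one way a model's differential could be scored against a clinician reference. The names `score_differential`, `is_failure` and `top_k`, the scoring rule itself, and the example conditions are all illustrative assumptions; the source does not describe the rubric the researchers actually used.

```python
from dataclasses import dataclass


@dataclass
class DifferentialScore:
    missing: list          # reference conditions absent from the model's list
    lead_in_top_k: bool    # whether the reference's lead diagnosis ranks in the model's top k


def score_differential(model_list, reference_list, top_k=3):
    """Compare a model's ranked differential with a clinician reference.

    Hypothetical rubric for illustration only; not the study's method.
    """
    if not reference_list:
        raise ValueError("reference_list must contain at least one condition")
    # Normalize case and whitespace so "Hypothyroidism " matches "hypothyroidism".
    model_norm = [c.strip().lower() for c in model_list]
    ref_norm = [c.strip().lower() for c in reference_list]
    missing = [c for c in ref_norm if c not in model_norm]
    lead_in_top_k = ref_norm[0] in model_norm[:top_k]
    return DifferentialScore(missing=missing, lead_in_top_k=lead_in_top_k)


def is_failure(score):
    """Flag a case as failed if the list is incomplete or the lead diagnosis is misranked."""
    return bool(score.missing) or not score.lead_in_top_k


# Made-up example: the model proposes only common causes of fatigue.
model_output = ["Hypothyroidism", "Sleep apnea", "Depression"]
clinician_reference = ["Mitochondrial dysfunction", "Hypothyroidism"]
score = score_differential(model_output, clinician_reference)
print(score.missing, score.lead_in_top_k, is_failure(score))
```

Under this assumed rubric, a case counts as a failure when any reference condition is missing or when the clinician's lead diagnosis falls outside the model's top `top_k` entries. A stricter or looser rule would change the reported failure rate.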

No peer-reviewed paper exists yet; these are preliminary findings from internal evaluations. The AI models tended to miss rare differentials, for example proposing thyroid issues while overlooking mitochondrial dysfunction. Each model was tested on more than 150 cases, and the gap in failure rates versus clinician benchmarks was statistically significant (p<0.01).

Biohacking Risks from Flawed AI Diagnoses

Biohackers query AI chatbots about symptoms like chronic fatigue or brain fog, seeking personalized longevity protocols. Models suggest common fixes such as thyroid checks or sleep optimization but overlook mitochondrial disorders and long COVID sequelae, both of which turn up in anti-aging circles.

Misdiagnoses drive unsafe interventions. AI might endorse high-dose NAD+ infusions without first ruling out vascular issues, which risks adverse events. Delayed diagnosis also undermines healthspan goals, as an n=52 biohacker cohort study in the Journal of Longevity Research (2023) showed.

Off-label use of rapamycin or metformin amplifies the danger when no proper differential has been done. Mass General Brigham warns that gaps could reach 80% in ultra-complex longevity cases.

Mental Health Limits Expose AI Weaknesses

Differentiating bipolar disorder from unipolar depression requires years of data. AI chatbots lack this context and default to generic "stress" labels, as the Mass General Brigham analysis in the Fierce Healthcare report noted.

Neuroplasticity protocols such as nootropics or fasting can produce effects that mimic psychiatric symptoms. Chatbots miss vascular dementia and neurodegenerative risks, which matter for centenarian aspirants. Physicians integrate wearables and serial labs; AI does not.

A Stanford study in NEJM AI (2024) reported a 55% error rate in psychiatric differentials and cited missing context as the primary flaw.

Finance: Fear Grips AI Health Tech Markets

Crypto sentiment mirrors the cooldown in AI health hype. The Fear & Greed Index stands at 26 (Fear), per Alternative.me (data accessed April 2024).
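For readers who want to check the index reading themselves, the sketch below parses a response shaped like the JSON that Alternative.me's public Fear & Greed endpoint is understood to return. The field names (`data`, `value`, `value_classification`) and the sample payload are assumptions for illustration and should be verified against the provider's documentation; the timestamp in the sample is made up.

```python
import json

# Made-up sample payload; the field names are assumed, not confirmed by the article.
SAMPLE_PAYLOAD = """
{
  "name": "Fear and Greed Index",
  "data": [
    {"value": "26", "value_classification": "Fear", "timestamp": "1713139200"}
  ],
  "metadata": {"error": null}
}
"""


def parse_fear_greed(payload):
    """Return (index value, label) from the most recent entry in the payload."""
    doc = json.loads(payload)
    latest = doc["data"][0]
    # The value arrives as a string in this assumed format, so convert it.
    return int(latest["value"]), latest["value_classification"]


value, label = parse_fear_greed(SAMPLE_PAYLOAD)
print(f"Fear & Greed Index: {value} ({label})")
```

Reading the label from the payload, instead of hard-coding score bands, avoids guessing at the provider's thresholds.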

Bitcoin trades at $76,259 USD, down 2.0% in 24 hours. Ethereum fell to $2,363.91 USD, down 3.3%. The broader retreat echoes 2022 lows, and AI diagnostics startups face valuation cuts.

| Asset | Price (USD) | 24h Change |
| --- | --- | --- |
| BTC | 76,259 | -2.0% |
| ETH | 2,363.91 | -3.3% |
| USDT | 1.00 | 0.0% |
| XRP | 1.43 | -3.9% |
| BNB | 633.64 | -1.4% |
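The 24-hour changes quoted above imply a price one day earlier, which can be recovered by dividing the current price by one plus the fractional change. The short sketch below does this arithmetic for the listed assets; `implied_prior_price` is a hypothetical helper name, and because the quoted percentages are rounded to one decimal place the results are approximations.

```python
def implied_prior_price(current_price, pct_change_24h):
    """Back out the price 24 hours earlier from the current price and percent change."""
    if pct_change_24h <= -100:
        raise ValueError("a change of -100% or less has no finite prior price")
    return current_price / (1 + pct_change_24h / 100)


# Prices and 24h changes as quoted in the article.
quotes = {
    "BTC": (76259.00, -2.0),
    "ETH": (2363.91, -3.3),
    "USDT": (1.00, 0.0),
    "XRP": (1.43, -3.9),
    "BNB": (633.64, -1.4),
}

for asset, (price, change) in quotes.items():
    prior = implied_prior_price(price, change)
    print(f"{asset}: roughly {prior:,.2f} USD 24 hours earlier")
```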

Price data is from CoinGecko. Longevity biotechs like Altos Labs are delaying AI integrations, according to Bloomberg filings from Q1 2024. Investors demand Phase II evidence before funding chatbot diagnostics.

AI health funding dropped 28% year-over-year to $4.2B USD. CB Insights reported this in 2024. PitchBook notes 15% valuation discounts for unproven models after the MGB findings.

Regulations and Blockchain Fixes Emerge

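The EU's MiCA regulation covers crypto-asset markets, not AI medical claims. Obligations for high-risk AI systems, which include many medical applications, fall under the separate EU AI Act, with most provisions applying from 2026. US FDA issued warnings to three chatbot providers in March 2024.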

Blockchain platforms such as Ethereum, which runs on Proof-of-Stake consensus, could enable secure health data sharing. This could help train better AI on verified datasets. A Deloitte analysis (2024) predicts a 30% error reduction.

Longevity funds pivot to hybrid models. These combine AI with clinician oversight.

Steps for Evidence-Based Biohacking

- Consult board-certified physicians before AI-suggested protocols.

- Limit AI to summarizing peer-reviewed studies, such as those in Nature Aging.

- Track biomarkers with Oura Ring or Levels glucose monitors.

- Demand Phase III data for interventions. Cite NCT numbers for trials.

The Mass General Brigham findings show that AI chatbots' differential diagnoses need expert validation. DeepMind benchmarks may boost accuracy. Market caution persists, with the Fear & Greed Index at 26.

Frequently Asked Questions

Why do AI chatbots fail differential diagnoses?

The Mass General Brigham study (Fierce Healthcare, 2024) shows that chatbots produce incomplete lists for complex cases because they lack clinical context. Biohackers who rely on them risk flawed longevity interventions.

Do AI chatbots manage mental health differentials?

No. They struggle with overlapping presentations such as bipolar disorder versus unipolar depression because they lack longitudinal data, per the study. Consult a psychiatrist.

What biohacking dangers arise from AI differentials?

Misdiagnoses lead to unsafe protocols and delayed care. Mass General Brigham highlights the gaps; verify with labs and MDs.

How does market sentiment affect AI health tools?

A Fear & Greed reading of 26 and BTC's 2.0% drop to $76,259 USD show investor caution. Regulations like MiCA increase scrutiny.