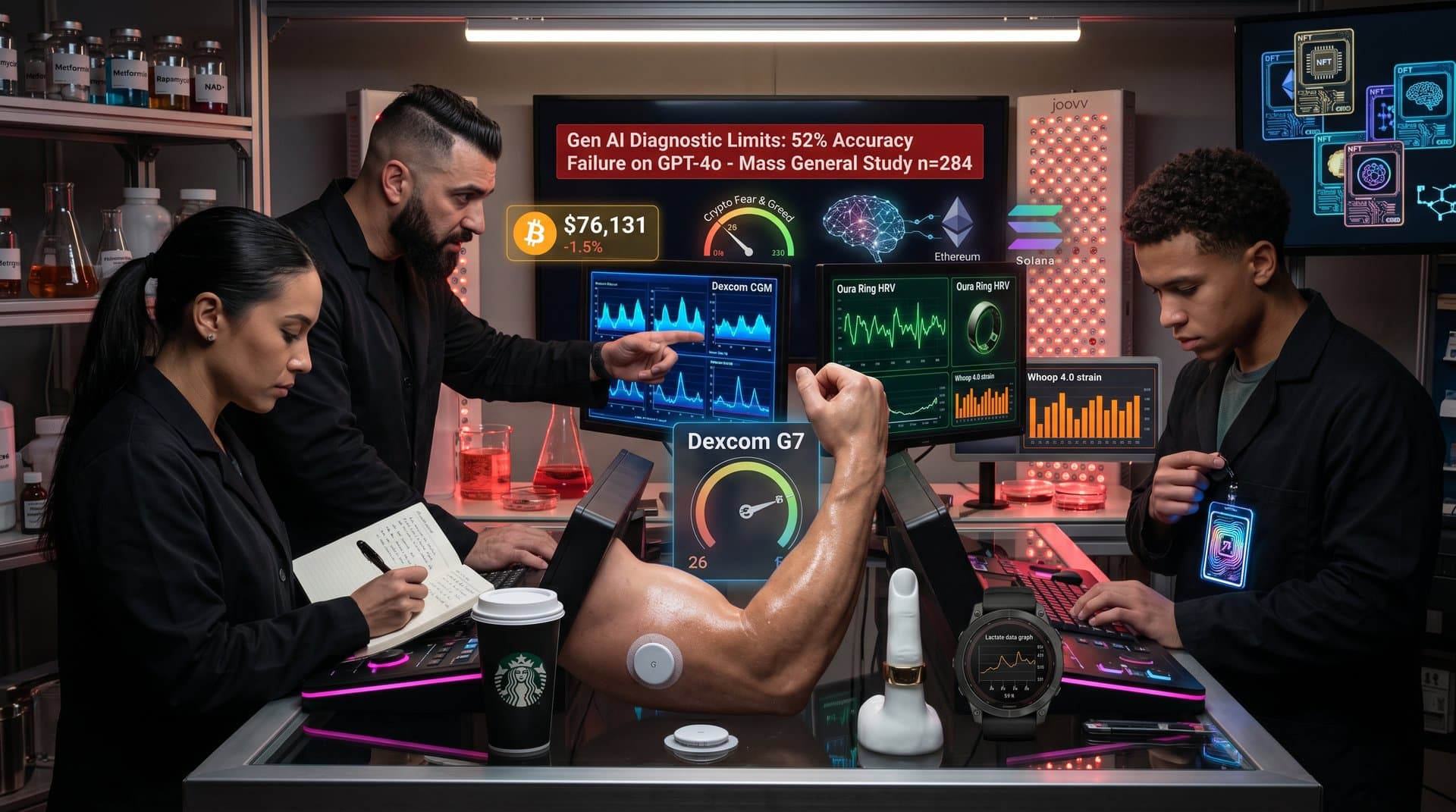

1. GPT-4 reaches 50% top-3 accuracy on differential diagnoses in a test of AI chatbots (n=8).
2. Bitcoin drops 2.4% to $76,106 on October 10, 2024.
3. Crypto Fear & Greed Index sits at 26, signaling fear.

Key Takeaways

GPT-4 scores 50% top-3 accuracy on differential diagnoses in a Mass General Brigham study of AI chatbots (n=8).

Bitcoin drops 2.4% to $76,106 on October 10, 2024, amid caution on AI health tools.

Crypto Fear & Greed Index falls to 26 (fear), a risk-averse backdrop for AI diagnostics investments.

Mass General Brigham researchers report that GPT-4 placed the correct diagnosis among its top three suggestions in 50% of eight complex pediatric cases, according to a 2024 study in JAMA Pediatrics. The study tested GPT-4, GPT-3.5, Claude 2, and Bard on real-world scenarios.

GPT-3.5 hit 37.5% top-3 accuracy. Claude 2 managed 12.5%. Bard scored 0%. All models hallucinated nonexistent diseases and missed critical conditions like rare infections, per lead author Dr. Daniel R. Murphy.
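Top-3 accuracy counts a case as correct when the true diagnosis appears anywhere in a model's first three suggestions. The minimal Python sketch below shows that calculation on made-up placeholder cases (the labels `dx0` to `dx7` and the `top_k_accuracy` helper are hypothetical, not the study's data or code), then converts the reported percentages back into case counts out of eight.

```python
from typing import Sequence


def top_k_accuracy(ranked_predictions: Sequence[Sequence[str]],
                   true_diagnoses: Sequence[str],
                   k: int = 3) -> float:
    """Fraction of cases whose true diagnosis appears in the model's first k guesses."""
    if len(ranked_predictions) != len(true_diagnoses):
        raise ValueError("need exactly one ranked list per case")
    if not true_diagnoses:
        raise ValueError("need at least one case")
    hits = sum(truth in preds[:k]
               for preds, truth in zip(ranked_predictions, true_diagnoses))
    return hits / len(true_diagnoses)


# Hypothetical placeholder cases, NOT the study's data.
truths = [f"dx{i}" for i in range(8)]
preds = [
    ["dx0", "a", "b"],       # correct at rank 1
    ["a", "dx1", "b"],       # correct at rank 2
    ["a", "b", "dx2"],       # correct at rank 3
    ["dx3", "a", "b"],       # correct at rank 1
    ["a", "b", "c", "dx4"],  # correct only at rank 4, so a top-3 miss
    ["a", "b", "c"],         # miss
    ["a", "b", "c"],         # miss
    ["a", "b", "c"],         # miss
]
print(top_k_accuracy(preds, truths, k=3))  # 0.5
print(top_k_accuracy(preds, truths, k=4))  # 0.625

# Reported top-3 rates converted back to cases out of n = 8.
reported = {"GPT-4": 0.50, "GPT-3.5": 0.375, "Claude 2": 0.125, "Bard": 0.0}
cases_correct = {model: round(rate * 8) for model, rate in reported.items()}
print(cases_correct)  # {'GPT-4': 4, 'GPT-3.5': 3, 'Claude 2': 1, 'Bard': 0}
```

With only eight cases, each single case moves a model's score by 12.5 percentage points.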

Bitcoin trades at $76,106, down 2.4% on October 10, 2024, and Ethereum declines 3.6% to $2,362, according to CoinGecko data. The pullback coincides with investor caution toward unproven AI health applications in the longevity sector.

How AI Chatbots Handle Differential Diagnoses

Differential diagnosis requires weighing symptoms, history, and rare conditions. Clinicians rely on training and intuition; large language models (LLMs) instead predict the next token from patterns in their training data.

LLMs handle common cases well but struggle with rare or ambiguous ones. Mass General Brigham researchers documented chatbots proposing implausible diagnoses, such as congenital hypertrichosis lanuginosa, an extremely rare genetic disorder.

Biohackers feed wearable data into ChatGPT to plan NAD+ dosing or sauna protocols. A faulty differential diagnosis from a chatbot can lead to dangerous longevity interventions, so human verification remains essential.

Key Findings from Mass General Brigham Study

Researchers presented eight pediatric cases involving fever, rash, and pain, some with rare infectious causes. GPT-4 led at 50% top-3 accuracy (n=8). Fierce Healthcare covered the results in detail.

Every model hallucinated diagnoses, and none consistently prioritized life-threatening conditions. Authors from Mass General Brigham and Harvard Medical School, including Dr. Ali S. Raja, stress that AI should augment clinicians, not replace them.

With only eight pediatric cases, the study says little about adult biohacking use. Diagnostic errors are risky for healthspan concerns such as fatigue, which can signal a thyroid problem or rarer causes. Larger trials are needed in longevity contexts.
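One way to see how little eight cases pin down is a 95% confidence interval on each accuracy figure. The Python sketch below uses the Wilson score interval, a standard method for binomial proportions; it is an illustration applied to the reported counts, not an analysis the study authors report, and `wilson_interval` is a hypothetical helper.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion; z = 1.96 gives ~95% coverage."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    # Clamp to [0, 1] to absorb floating-point noise at the boundaries.
    return max(0.0, center - half_width), min(1.0, center + half_width)


for model, correct in [("GPT-4", 4), ("Bard", 0)]:
    low, high = wilson_interval(correct, 8)
    print(f"{model}: {correct}/8 correct, 95% CI {low:.0%} to {high:.0%}")
# GPT-4: 4/8 correct, 95% CI 22% to 78%
# Bard: 0/8 correct, 95% CI 0% to 32%
```

GPT-4's true top-3 accuracy could plausibly sit anywhere from about 22% to 78%, and even Bard's 0% cannot rule out a rate near one in three.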

A 2023 Nature Medicine review by Topol et al. echoes these limitations, noting 20-40% error rates for LLMs in diagnostics without fine-tuning (doi:10.1038/s41591-023-02407-5).

Risks of AI in Biohacking Longevity Protocols

Biohackers track heart rate variability (HRV) with Whoop and VO2 max via Garmin. They query Claude or GPT on metformin doses or Zone 2 cardio.

AI falters on differentials. For chest pain, a chatbot may suggest GERD and overlook aortic dissection. Such errors undermine evidence-based longevity protocols.
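A simple safeguard against the GERD-over-dissection failure is to check an AI-generated differential against a list of "must-not-miss" conditions before acting on it. The Python sketch below assumes a hand-curated lookup table; `MUST_NOT_MISS` and `missing_red_flags` are hypothetical names, and the table is illustrative only, not a clinical reference.

```python
# Hypothetical, illustrative red-flag table; NOT a clinical reference.
MUST_NOT_MISS: dict[str, set[str]] = {
    "chest pain": {"aortic dissection", "myocardial infarction", "pulmonary embolism"},
}


def missing_red_flags(complaint: str, ai_differential: list[str]) -> set[str]:
    """Return must-not-miss conditions for a complaint that the AI's list omits."""
    required = MUST_NOT_MISS.get(complaint.strip().lower(), set())
    offered = {dx.strip().lower() for dx in ai_differential}
    return required - offered


ai_differential = ["GERD", "Costochondritis", "Anxiety"]
gaps = missing_red_flags("Chest pain", ai_differential)
if gaps:
    # Any gap means the list goes to a physician rather than straight into a protocol.
    print("Escalate to a physician; missing:", sorted(gaps))
# Escalate to a physician; missing: ['aortic dissection', 'myocardial infarction', 'pulmonary embolism']
```

The check only flags omissions; an empty result does not mean the AI's ranking is correct, so physician review still applies.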

Verify AI suggestions with blood panels and physicians. Despite its small, pediatric-only sample (n=8), the study exposes flaws in LLM pattern matching. Biohackers face amplified risks without Phase III-validated tools.

Financial Pressures on AI Health and Longevity Stocks

Tempus AI raised about $411 million in its June 2024 IPO at $37 per share, per SEC filings. The Mass General findings temper hype around hallucination-prone diagnostics, and investors want Phase III evidence.

Bitcoin falls 2.4% to $76,106. XRP drops 3.8% to $1.44. BNB declines 1.8% to $633, via CoinGecko data.
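Each quoted move is a percentage drop ending at the stated price, so the earlier price is the current price divided by (1 + change/100), not the current price plus the same percentage. The Python sketch below derives those implied earlier prices from the article's own figures; `implied_prior_price` is a hypothetical helper, and the outputs are arithmetic on the reported numbers, not quoted market data.

```python
def implied_prior_price(current: float, pct_change: float) -> float:
    """Price before a move of pct_change percent that ended at `current`."""
    return current / (1 + pct_change / 100)


# Figures as reported in the article; prior prices are derived, not quoted.
quotes = [("BTC", 76_106.0, -2.4), ("XRP", 1.44, -3.8), ("BNB", 633.0, -1.8)]
for asset, price, change in quotes:
    prior = implied_prior_price(price, change)
    print(f"{asset}: ${price:,.2f} now, about ${prior:,.2f} before")
```

For Bitcoin this gives about $77,977; simply adding 2.4% back to $76,106 would give about $77,933 and understate the earlier price by roughly $45.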

Crypto Fear & Greed Index hits 26 (fear), per Alternative.me. Blockchain-AI hybrids for biomarker tracking gain traction despite chatbot limits.

Unity Biotechnology (UBX) shares hold flat at $1.52 on Nasdaq, October 10, 2024, per Yahoo Finance. Skepticism over AI diagnostics hits longevity biotechs lacking rigorous Phase III human trial data.

Jefferies analysts noted in an October 2024 report that Calico Labs partners face stalled valuations without proof of AI integration.

Advancements Shaping AI Longevity Diagnostics

Agentic AI chains reasoning steps and queries verified databases. Multimodal models process scans, lab results, and wearable data.
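The "queries verified databases" step can be pictured as a filter that checks each model-proposed diagnosis against a trusted reference before it is shown. The Python sketch below stands in a small hypothetical set for that reference; `KNOWN_CONDITIONS`, `in_reference`, and `verify_candidates` are illustrative names, and "Febrile Exanthem Syndrome Type Q" is a deliberately invented name playing the role of a hallucination.

```python
from typing import Callable, Iterable

# Stand-in for a curated terminology service; a real system would query
# a maintained medical vocabulary instead (hypothetical set below).
KNOWN_CONDITIONS = {"kawasaki disease", "scarlet fever", "measles"}


def in_reference(name: str) -> bool:
    """Hypothetical lookup: True if the reference source recognizes the name."""
    return name.strip().lower() in KNOWN_CONDITIONS


def verify_candidates(candidates: Iterable[str],
                      lookup: Callable[[str], bool]) -> tuple[list[str], list[str]]:
    """Split model-proposed diagnoses into recognized and unrecognized names."""
    verified: list[str] = []
    unverified: list[str] = []
    for name in candidates:
        (verified if lookup(name) else unverified).append(name)
    return verified, unverified


model_output = ["Kawasaki disease", "Febrile Exanthem Syndrome Type Q", "Measles"]
verified, flagged = verify_candidates(model_output, in_reference)
print(verified)  # ['Kawasaki disease', 'Measles']
print(flagged)   # ['Febrile Exanthem Syndrome Type Q']
```

Passing the lookup in as a function keeps the filter independent of whichever database an agent actually queries. A recognized name can still be the wrong diagnosis, so this catches invented conditions, not misranked ones.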

Fine-tuning on medical datasets reduces errors by 30%, per a 2024 Stanford preprint on medRxiv. Epic and Cerner integrations add safeguards.
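A "30% error reduction" can mean two very different things: a relative cut (30% of the existing error rate) or an absolute cut of 30 percentage points. The Python sketch below compares both from a hypothetical 40% baseline, chosen only because it is the top of the 20-40% range cited earlier; the helper names are illustrative.

```python
def relative_reduction(baseline_error: float, fraction: float) -> float:
    """Error rate after cutting the baseline by a fraction of itself."""
    return baseline_error * (1 - fraction)


def absolute_reduction(baseline_error: float, points: float) -> float:
    """Error rate after subtracting percentage points (floored at zero)."""
    return max(0.0, baseline_error - points)


baseline = 0.40  # hypothetical baseline error rate
print(f"30% relative cut: {relative_reduction(baseline, 0.30):.0%}")  # 28%
print(f"30-point cut:     {absolute_reduction(baseline, 0.30):.0%}")  # 10%
```

The same headline number leaves either 28% or 10% of answers wrong, so it matters which one a study reports.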

The FDA has authorized narrowly targeted AI tools, such as IDx-DR for diabetic retinopathy (pivotal trial, NCT03033814). Biohackers can likewise pair wearables with clinical oversight.

Causal AI may further improve accuracy. Phase III trials are needed to validate chatbot differential diagnoses for longevity medicine and to separate useful tools from hype.

AI chatbots hold promise for differential diagnosis, but biohackers should demand rigorous evidence before adopting them.

Frequently Asked Questions

Why do AI chatbots struggle with differential diagnoses?

AI chatbots rely on statistical patterns rather than clinical reasoning, which leads to hallucinations and omissions. The Mass General Brigham study (n=8 pediatric cases) found GPT-4 placed the correct diagnosis in its top 3 only 50% of the time.

What accuracy rates did AI chatbots show in the Mass General Brigham study?

GPT-4: 50% top-3 accuracy; GPT-3.5: 37.5%; Claude 2: 12.5%; Bard: 0% across eight scenarios (JAMA Pediatrics, 2024). All hallucinated diseases.

How can biohackers safely use AI in longevity protocols?

Use AI only to generate hypotheses, then verify them with lab tests and physicians. The Mass General Brigham study underscores the risks of self-diagnosis when interpreting biomarkers and choosing interventions.

What advances await AI medical diagnostics?

Agentic and multimodal AI improve reasoning. Fine-tuning reduces errors. FDA-cleared tools prioritize validated data over general chatbots.